Meta AI Insights Gives Parents Visibility into Teen Conversations

Edited by Mursal Rahman — April 28, 2026 — Social Media

This article was written with the assistance of AI.

References: about.fb & digitaltrends

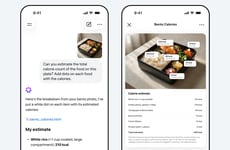

Meta’s parental AI supervision tools, including the new Insights tab for Teen Accounts, introduce a more transparent way for families to engage with artificial intelligence. By allowing parents to view the topics their teens discuss with Meta AI over a seven-day period, the feature brings greater visibility into how young users interact with AI systems. It also includes safeguards such as alerts for sensitive topics and expert-backed conversation prompts, helping parents guide discussions around digital behavior.

This approach reflects a growing demand for accountability and safety in AI-driven platforms, especially for younger audiences. By embedding oversight tools directly into its ecosystem, Meta aims to strengthen trust with users and regulators while differentiating its services in a competitive landscape. It could also set a precedent for other tech companies to incorporate similar controls, potentially shaping industry standards around responsible AI usage and family-centered digital experiences.

Image Credit: Meta

Trend Themes

- Family-centric AI Transparency: Greater visibility into AI interactions within family accounts creates potential for platforms to embed trust signals and provenance features that reshape user expectations for accountable AI.
- AI Conversation Monitoring: Real-time and retrospective monitoring of teen-AI exchanges introduces possibilities for adaptive moderation systems that balance privacy with contextual safety oversight.
- Expert-guided Digital Wellness: Integration of expert-backed prompts and alerts alongside AI chat interfaces suggests new models for blended human-AI guidance that influence behavioral and educational outcomes for youth.

Industry Implications

- Social Media Platforms: Platforms embedding parental supervision tools can differentiate through built-in compliance and family trust features that may redefine moderation and product design priorities.
- Edtech and E-learning: Learning environments incorporating AI conversation insights could evolve toward personalized curricula that account for students' digital literacy and emotional well-being.
- Child Safety and Privacy Services: Specialized services offering privacy-preserving oversight and audit trails for minors' AI interactions are positioned to transform regulatory compliance and risk management offerings.
