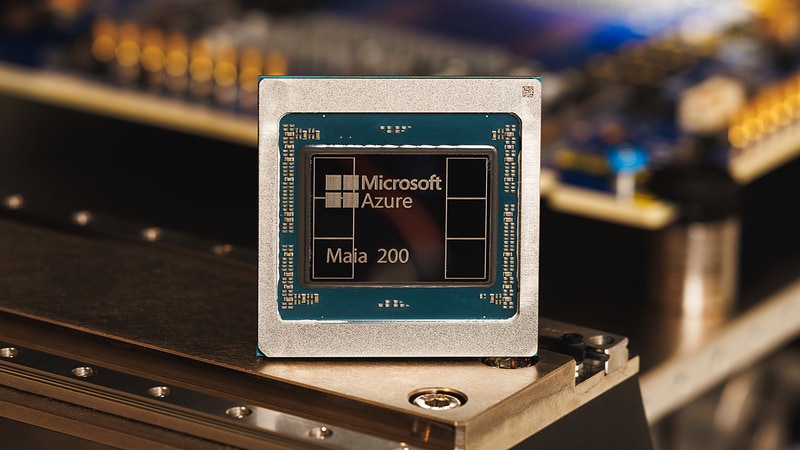

Microsoft’s Maia 200 Targets Faster, More Efficient AI Inference

Edited by Colin Smith — January 27, 2026 — Tech

This article was written with the assistance of AI.

References: news.microsoft & techcrunch

Microsoft’s Maia 200 is a new in-house accelerator chip built to handle large-scale AI inference workloads across its cloud. Positioned as a follow-up to the Maia 100 introduced in 2023, the chip is designed to run today’s largest AI models more quickly while using power more efficiently. With over 100 billion transistors and double-digit petaflop performance at low precision, it focuses specifically on the compute stage where trained models generate outputs.
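The article does not describe the chip's number formats, so the sketch below uses symmetric integer quantization as a stand-in to show what "low precision" trades away. This is a swapped-in illustration, not Maia 200's actual format. The function names and sample weights are hypothetical. In this scheme, a 4-bit code leaves 15 usable values (-7 to 7), while an 8-bit code leaves 255 (-127 to 127). Fewer bits make storage and arithmetic cheaper but coarser.

```python
# Sketch: symmetric integer quantization with one shared scale per tensor.
# Assumption: integer quantization stands in for the chip's real low-precision
# formats, which the article does not describe. All names are hypothetical.

def quantize(values: list[float], bits: int) -> tuple[list[int], float]:
    """Map floats to signed integers in [-qmax, qmax] using a shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 at 4-bit, 127 at 8-bit
    largest = max(abs(v) for v in values)
    if largest == 0:
        return [0] * len(values), 1.0  # all-zero input: any scale works
    scale = largest / qmax
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [x * scale for x in q]


def max_abs_error(values: list[float], bits: int) -> float:
    """Worst-case round-trip error introduced by quantizing at `bits` bits."""
    q, scale = quantize(values, bits)
    return max(abs(a - b) for a, b in zip(values, dequantize(q, scale)))


if __name__ == "__main__":
    weights = [0.82, -0.33, 0.05, -0.91, 0.47]  # made-up example values
    for bits in (4, 8):
        print(f"{bits}-bit: max error {max_abs_error(weights, bits):.4f}")
```

Running it shows the 8-bit round trip landing much closer to the original values than the 4-bit one. That is the accuracy cost hardware designers weigh against the higher throughput of 4-bit math.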

The Maia 200 delivers over 10 petaflops of 4-bit performance and around 5 petaflops at 8-bit, representing a sizable jump over Microsoft’s earlier silicon. The company reported that individual Maia 200 nodes are already powering internal systems, including models from its Superintelligence team and Copilot services. Alongside the hardware, Microsoft has opened access to a Maia 200 software development kit, inviting developers, academic researchers, and advanced AI labs to tune workloads for the new architecture.
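To put the petaflop figures in perspective, here is a back-of-envelope sketch of the most tokens per second a single chip could generate if inference were purely compute-bound. Several assumptions apply. It uses the common rule of thumb that a dense transformer needs about 2 × N floating-point operations per generated token, where N is the parameter count and attention cost is ignored. It treats "over 10" and "around 5" petaflops as exactly 10 and 5. It uses a hypothetical 70-billion-parameter model. Real decoding is often limited by memory bandwidth rather than compute, so these numbers are ceilings, not expected results.

```python
# Back-of-envelope sketch: compute-bound ceiling on generation throughput.
# Assumptions (not from Microsoft): ~2 * N FLOPs per token for a dense
# transformer, full peak rate sustained, and a hypothetical 70B model.

PEAK_FLOPS = {
    "4-bit": 10e15,  # "over 10 petaflops", treated as exactly 10 PFLOPS
    "8-bit": 5e15,   # "around 5 petaflops", treated as exactly 5 PFLOPS
}


def tokens_per_second_ceiling(num_params: float, peak_flops: float) -> float:
    """Upper bound on tokens/s if the forward pass were purely compute-bound."""
    flops_per_token = 2 * num_params
    return peak_flops / flops_per_token


def weight_bytes(num_params: float, bits: int) -> float:
    """Bytes needed just to store the weights at a given precision."""
    return num_params * bits / 8


if __name__ == "__main__":
    n = 70e9  # hypothetical model size, chosen only for illustration
    for label, bits in (("4-bit", 4), ("8-bit", 8)):
        tps = tokens_per_second_ceiling(n, PEAK_FLOPS[label])
        gb = weight_bytes(n, bits) / 1e9
        print(f"{label}: <= {tps:,.0f} tokens/s, weights ~{gb:.0f} GB")
    # prints:
    # 4-bit: <= 71,429 tokens/s, weights ~35 GB
    # 8-bit: <= 35,714 tokens/s, weights ~70 GB
```

Halving the precision doubles the compute ceiling and halves the weight footprint. This is why 4-bit throughput is the headline figure for inference-focused chips.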

For AI builders, Maia 200 reflects a growing shift toward custom data center chips aimed at inference costs, which have become a major operating expense as models scale. By offloading work from general-purpose GPUs, the chip can make capacity more predictable and potentially lower energy consumption for always-on AI services. This reinforces a broader trend in cloud infrastructure, where major platforms design vertically integrated stacks that pair proprietary hardware with specialized software to streamline AI deployment at scale.

Image Credit: Microsoft

Trend Themes

- Custom AI Inference Chips: Custom inference chips such as Microsoft's Maia 200 show a move toward specialized hardware that runs large-scale AI models more efficiently and reduces operating costs.

- Vertically Integrated Cloud Infrastructure: Vertically integrated cloud infrastructure is gaining traction as platforms like Microsoft build proprietary hardware-software stacks to make AI deployment more efficient.

- Eco-efficient AI Systems: Chips such as the Maia 200, which aim to reduce energy consumption while maintaining high performance, underscore the push toward more energy-efficient AI systems.

Industry Implications

- Cloud Computing: The cloud computing industry is increasingly integrating cutting-edge AI technologies to process large-scale models more efficiently.

- Semiconductors: Demand for advanced AI inference chips that handle complex model computations while remaining power-efficient is driving innovation in the semiconductor industry.

- Artificial Intelligence: The artificial intelligence sector stands to benefit from chips like the Maia 200, which are designed to handle demanding inference workloads and streamline AI services.
