Microsoft Ships MAI-Transcribe-1, MAI-Voice-1 and MAI-Image-2

Edited by Colin Smith — April 15, 2026 — Tech

This article was written with the assistance of AI.

References: thenextweb

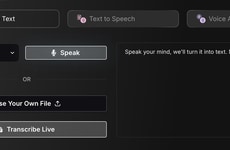

Microsoft released three in-house MAI models (MAI-Transcribe-1, MAI-Voice-1 and MAI-Image-2) via Microsoft Foundry. They were developed by the MAI Superintelligence team led by Mustafa Suleyman and are designed to provide integrated speech, voice and image capabilities. MAI-Transcribe-1 delivers low word-error rates across 25 languages; MAI-Voice-1 generates high-quality speech and supports custom voices from seconds of audio; MAI-Image-2 ranks highly on text-to-image leaderboards.

The models run on Microsoft infrastructure and carry no OpenAI branding. The trio brings performance and efficiency gains, including faster transcription and subsecond audio generation on a single GPU, and arrives alongside enterprise collaborations with partners such as WPP. For enterprises, the MAI family creates a self-contained multimodal stack that reduces dependency on third-party models and simplifies deployment across Microsoft cloud services, signaling a shift toward vertically integrated AI suites in the enterprise market.

Image Credit: Below the Sky / Shutterstock.com



Trend Themes

- Enterprise-owned Multimodal Stacks: A shift toward vendor-controlled multimodal AI stacks that bundle speech, voice and image models promises to reduce reliance on third-party providers and consolidate end-to-end capabilities within single cloud ecosystems.

- Few-second Custom Voice Synthesis: The ability to generate high-fidelity custom voices from seconds of audio enables personalized voice assets at scale, opening possibilities for brand-specific synthetic voices and scalable localization.

- Performance-driven Model Efficiency: Subsecond audio generation on a single GPU and faster multilingual transcription demonstrate a push for models that maximize throughput and cost-efficiency for real-time enterprise workloads.

Industry Implications

- Cloud Infrastructure: Integrated MAI models hosted on a major cloud provider point toward vertically integrated offerings that could shift enterprise procurement toward platform-native AI services.

- Advertising and Media: High-quality custom voice synthesis and rapid image generation present new paths for scalable ad personalization, voice branding and automated multimedia content production.

- Localization and Transcription Services: Multilingual transcription with low word-error rates, combined with fast speech generation, suggests opportunities to streamline localization pipelines and reduce turnaround times for global content.
