LocalAPI.ai Lets Users Run and Manage AI Models Directly in Browser

Ellen Smith — February 13, 2026 — Tech

References: github

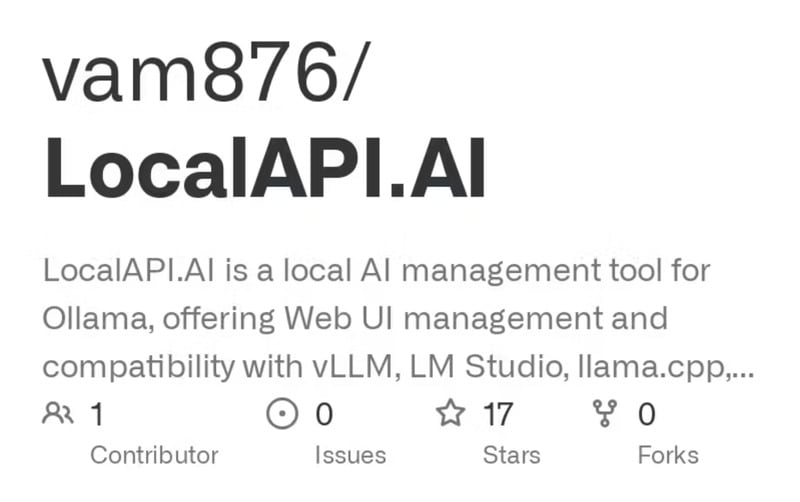

LocalAPI.ai is a browser-based tool that lets users invoke and manage local AI models without complex setup. It is designed to simplify working with locally deployed models: a single HTML file serves as the interface to supported local backends, including vLLM, LM Studio, and llama.cpp.
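As a rough illustration of how a browser page can talk to backends like these: vLLM, LM Studio, and llama.cpp's server can each expose an OpenAI-compatible HTTP API, so a page can reach them with a plain `fetch` call. The TypeScript sketch below is not taken from LocalAPI.ai. The names `buildChatRequest`, `chat`, and `DEFAULT_BASE_URLS` are hypothetical, and the addresses are commonly used defaults that may differ on a given machine.

```typescript
// Hypothetical sketch, not LocalAPI.ai's actual code.
// Assumes the local backend exposes an OpenAI-compatible HTTP API.

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface ChatRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

// Assumed default addresses; adjust them to match your own setup.
const DEFAULT_BASE_URLS: Record<string, string> = {
  "vllm": "http://localhost:8000/v1",
  "lm-studio": "http://localhost:1234/v1",
  "llama.cpp": "http://localhost:8080/v1",
};

// Build the request separately from sending it, so it can be inspected or tested offline.
function buildChatRequest(
  baseUrl: string,
  model: string,
  messages: ChatMessage[],
): ChatRequest {
  return {
    // Strip trailing slashes so the joined URL has exactly one separator.
    url: `${baseUrl.replace(/\/+$/, "")}/chat/completions`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ model, messages }),
    },
  };
}

// Send a single user prompt and return the first reply's text.
async function chat(baseUrl: string, model: string, prompt: string): Promise<string> {
  const { url, init } = buildChatRequest(baseUrl, model, [
    { role: "user", content: prompt },
  ]);
  const res = await fetch(url, init);
  if (!res.ok) {
    throw new Error(`Backend returned HTTP ${res.status}`);
  }
  const data = (await res.json()) as {
    choices: { message: { content: string } }[];
  };
  return data.choices[0].message.content;
}
```

A call might look like `chat(DEFAULT_BASE_URLS["llama.cpp"], "my-local-model", "Hello")`, where `my-local-model` is a placeholder for whatever model the backend has loaded. Because the page and the model server are on different origins, the backend may also need to be configured to allow cross-origin requests.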

It gives developers and businesses a lightweight way to test, integrate, and experiment with AI models locally, reducing dependence on cloud infrastructure. From a business perspective, LocalAPI.ai targets three pain points in AI experimentation: accessibility, deployment speed, and operational cost. Because models are run and managed locally, the platform supports rapid prototyping, data privacy, and control over AI workflows. Its compatibility with multiple local runtimes broadens its usability, making it a versatile option for organizations that want lightweight, browser-based AI tooling.

Image Credit: LocalAPI.ai

Trend Themes

- In-browser Model Execution: Local inference within the browser reduces latency and preserves data privacy, creating potential for real-time AI experiences without cloud roundtrips.
- Local-first AI Deployment: Running models on-device minimizes operational costs and dependency on cloud providers, opening avenues for cost-sensitive and offline-capable AI products.
- Framework-agnostic Interoperability: Compatibility with multiple runtimes and model formats simplifies integration and encourages modular AI toolchains across diverse environments.

Industry Implications

- Enterprise Software: On-premise browser-based models support tighter data governance and faster prototyping workflows, which can reshape how enterprises embed AI into internal applications.
- Healthcare Technology: Local execution of models in clinical settings reduces patient data exposure and latency, enabling more privacy-preserving diagnostic and decision-support tools.
- Edge Device Manufacturing: Embedding browser-capable AI runtimes in consumer and industrial devices enables smarter, low-latency features that rely less on continuous cloud connectivity.
