Focal AI Routes Prompts To The Best AI Model In Real Time

Ellen Smith — April 23, 2026 — Tech

References: focalai.co

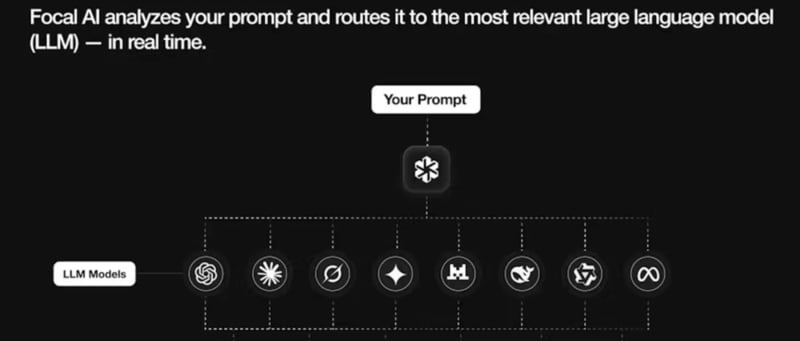

Focal AI is a routing-based interface that connects user prompts to multiple large language models (LLMs) through a single access point. The system analyses incoming requests and determines which underlying AI model is most suitable for the task, such as coding, content generation, data analysis, or research-oriented queries.

Instead of requiring users to manually select between different models, Focal AI automates this selection process in real time. The platform is designed to simplify access to a range of AI capabilities by acting as an intermediary layer between users and model providers. It is typically used in workflows where different types of tasks require specialised model strengths. Focal AI reflects broader developments in AI orchestration tools, where abstraction layers are introduced to improve usability, efficiency, and task-specific model allocation within multi-model environments.
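As a rough illustration of what automated model selection can look like, the sketch below routes a prompt by counting task keywords. It is only a toy under stated assumptions: the keyword lists, the model names (`model-code`, `model-general`, and so on), and the `route_prompt` function are hypothetical and are not drawn from Focal AI, whose actual routing method is not described here.

```python
from dataclasses import dataclass

# Task categories follow the article's examples. The keyword lists and
# model names are illustrative assumptions, not Focal AI's real rules.
TASK_KEYWORDS = {
    "coding": ("function", "bug", "compile", "python", "stack trace"),
    "content": ("blog", "headline", "rewrite", "slogan"),
    "data_analysis": ("csv", "dataset", "average", "correlation"),
    "research": ("sources", "literature", "studies", "cite"),
}

MODEL_FOR_TASK = {
    "coding": "model-code",
    "content": "model-writer",
    "data_analysis": "model-analyst",
    "research": "model-research",
}

DEFAULT_MODEL = "model-general"  # fallback when no keyword matches


@dataclass(frozen=True)
class Route:
    task: str
    model: str


def route_prompt(prompt: str) -> Route:
    """Pick the task whose keywords appear most often in the prompt."""
    text = prompt.lower()
    scores = {
        task: sum(keyword in text for keyword in keywords)
        for task, keywords in TASK_KEYWORDS.items()
    }
    best_task = max(scores, key=scores.get)
    if scores[best_task] == 0:
        return Route("general", DEFAULT_MODEL)
    return Route(best_task, MODEL_FOR_TASK[best_task])


if __name__ == "__main__":
    print(route_prompt("Fix the bug in this Python function"))
    print(route_prompt("Hello there"))
```

A production router would more plausibly use a trained classifier or a small model to label the request; keyword matching is used here only because it keeps the example self-contained.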

Image Credit: Focal AI


Trend Themes

- Model-oriented Routing: Routing user prompts to the most suitable model enables dynamic optimization of accuracy, latency, and cost across heterogeneous AI providers.
- Real-time Model Selection: Real-time analysis of requests and automated model switching can create granular performance tiers tailored to task complexity and SLA requirements.
- AI Orchestration Abstraction: An intermediary abstraction layer that standardizes interfaces to multiple LLMs can foster marketplaces for plug-and-play specialized models and policy engines.
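The trade-off between accuracy, latency, and cost mentioned in these themes can be sketched as a small selection function. This is a hypothetical example: the `ModelProfile` fields, the three candidate models, and all of their numbers are invented for illustration and do not describe any real provider or Focal AI's behaviour.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    accuracy: float     # 0..1, higher is better (assumed benchmark score)
    latency_s: float    # typical seconds per response
    cost_per_1k: float  # price per 1,000 tokens, arbitrary currency


# Made-up profiles used only to exercise the selection logic.
CANDIDATES = [
    ModelProfile("model-large", accuracy=0.92, latency_s=4.0, cost_per_1k=0.030),
    ModelProfile("model-medium", accuracy=0.85, latency_s=1.5, cost_per_1k=0.008),
    ModelProfile("model-small", accuracy=0.74, latency_s=0.5, cost_per_1k=0.001),
]


def pick_model(candidates, max_latency_s, w_accuracy=1.0, w_cost=1.0):
    """Drop models over the latency budget, then maximise
    w_accuracy * accuracy - w_cost * cost_per_1k."""
    eligible = [m for m in candidates if m.latency_s <= max_latency_s]
    if not eligible:
        raise ValueError("no model meets the latency budget")
    return max(
        eligible,
        key=lambda m: w_accuracy * m.accuracy - w_cost * m.cost_per_1k,
    )


if __name__ == "__main__":
    print(pick_model(CANDIDATES, max_latency_s=5.0).name)
    print(pick_model(CANDIDATES, max_latency_s=5.0, w_cost=10.0).name)
    print(pick_model(CANDIDATES, max_latency_s=1.0).name)
```

Treating latency as a hard budget and cost as a weighted penalty is one design choice among many; an SLA-driven system might instead define separate tiers, each with its own weights.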

Industry Implications

- Enterprise Software: Integrated routing layers in enterprise apps could centralize governance, observability, and cost controls for distributed AI workloads.
- Cloud Services: Cloud providers offering orchestration-as-a-service may reconfigure billing models and data residency guarantees around per-model routing decisions.
- Education Technology: Adaptive learning platforms that route pedagogical queries to subject-specific models can enable personalized instruction pipelines with measurable learning outcomes.
