Roblox Applies AI to Analyze Full Gameplay Interactions

mursal rahman — March 31, 2026 — Tech

References: about.roblox

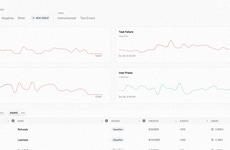

Roblox’s AI scene monitoring tools moderate content by analyzing entire in-game environments rather than isolated elements. Instead of reviewing single assets like text or avatars, the system evaluates how multiple components interact in real time, allowing it to detect harmful behavior that element-by-element review might miss. This shift enables more accurate identification of problematic scenarios while minimizing unnecessary disruptions to gameplay.
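To make the difference between element-level and scene-level review concrete, here is a toy sketch in Python. It is not Roblox's implementation, which the source does not describe; every name in it (`SceneElement`, `RISKY_PAIRS`, `NEAR`, and both functions) is hypothetical and exists only to illustrate the idea that two items can each pass an isolated check yet be flagged once their proximity is considered.

```python
import math
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class SceneElement:
    """One item placed in a scene. All fields are hypothetical."""
    name: str
    x: float
    y: float
    flagged_alone: bool = False  # verdict of an isolated, per-element check


# Assumption: these two names are acceptable separately but are treated
# as a problem when they appear close together in the same scene.
RISKY_PAIRS = {frozenset({"symbol_a", "symbol_b"})}
NEAR = 10.0  # assumed distance threshold, arbitrary units


def element_level_flags(scene):
    """Return names of elements that fail when reviewed in isolation."""
    return [e.name for e in scene if e.flagged_alone]


def scene_level_flags(scene):
    """Return pairs that are risky only because of how they are combined."""
    hits = []
    for a, b in combinations(scene, 2):
        close = math.hypot(a.x - b.x, a.y - b.y) <= NEAR
        if close and frozenset({a.name, b.name}) in RISKY_PAIRS:
            hits.append((a.name, b.name))
    return hits


scene = [
    SceneElement("symbol_a", 0.0, 0.0),
    SceneElement("symbol_b", 3.0, 4.0),
    SceneElement("tree", 50.0, 50.0),
]

print(element_level_flags(scene))  # nothing fails in isolation
print(scene_level_flags(scene))    # the nearby pair is flagged
```

In this sketch the per-element pass returns an empty list, while the pairwise pass surfaces the combination. A production system would presumably rely on learned models rather than a hand-written pair table; the table is used here only to keep the example small.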

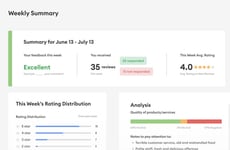

This approach supports safer digital ecosystems, which is critical for retaining users and attracting younger audiences. It also empowers creators with actionable insights through dashboard analytics, helping them refine game design and reduce moderation risks. For platforms, this reduces reliance on manual review while improving scalability. More broadly, this signals a move toward proactive, context-aware safety systems that can be applied across gaming, social media, and other user-generated content platforms.

Image Credit: Roblox

Trend Themes

- Context-aware Moderation: By evaluating whole environments and relationships between assets, moderation systems enable nuanced detection of harmful scenarios that evade element-by-element filters.

- Real-time Interaction Analysis: The continuous assessment of live gameplay interactions allows identification of emergent risks and coordinated abuse patterns as they develop.

- Creator-focused Safety Insights: Dashboards that synthesize contextual moderation data provide creators with visibility into design elements and social dynamics that correlate with safety incidents.

Industry Implications

- Online Gaming Platforms: Platforms hosting multiplayer experiences can shift from manual moderation to scalable AI systems that preserve play flow while reducing exposure to harmful interactions.

- Social Media Networks: Networks driven by complex user interactions can benefit from scene-level analysis to detect coordinated misinformation, harassment, or context-dependent policy violations.

- User-generated Content Marketplaces: Marketplaces relying on diverse asset combinations can use environment-aware monitoring to surface risk-prone listings and composition patterns that traditional checks miss.